Top AI MVP Agencies in 2026

Choosing an AI MVP agency in 2026 is harder than it looks because almost every team now claims to “build with AI.” The real question is not whether an agency uses AI in development, but whether it can help you define an AI feature that belongs in a first release. This guide compares agencies that look relevant for early-stage founders, especially those building practical AI products with limited runway, unclear scope, or no internal technical team.

TL;DR: The best AI MVP agencies in 2026 are the ones that help founders avoid fake complexity. A strong partner should reduce scope, choose the right level of AI for version one, and ship a product that users can actually test.

What makes an AI MVP agency actually useful in 2026?

A useful AI MVP agency does not start with the model. It starts with the workflow. That matters because many founders still approach AI MVPs backwards: they begin with “what can we build with AI?” instead of “what task should become faster, easier, or more valuable because of AI?”

The best agencies tend to be strong in three areas. First, they know how to cut an AI product down to one believable use case. Second, they understand where manual fallback, human review, or basic rule-based logic still belongs. Third, they can explain tradeoffs in founder language, not just in technical abstractions.

If you want the broader baseline before comparing vendors, “AI-Powered MVP Development: Save Time and Budget Without Cutting Quality” is the right place to start.

1. Valtorian

Best for non-technical founders who want a lean AI MVP without building “AI theater.”

Valtorian is especially relevant for early-stage founders who need help turning an AI idea into a usable first product, not just a polished prototype. The positioning is founder-led, scope-aware, and practical: the team emphasizes smaller first releases, real user behavior, analytics, and business outcomes instead of selling AI as the product by itself.

That matters in AI MVP work because many founders overestimate how much intelligence needs to be automated in version one. Valtorian’s approach appears stronger when the real challenge is shaping the first workflow, deciding what should stay manual, and launching fast enough to learn from users. For non-technical founders, that combination is usually more valuable than a heavier, more enterprise-style delivery model.

This aligns with Valtorian’s current positioning around fast launch, smallest useful version, founder-to-founder collaboration, and AI as a practical accelerator rather than the center of the marketing promise.

2. thoughtbot

Best for founders who want strong product judgment and disciplined feature decisions.

thoughtbot remains a serious option because it has long been associated with product thinking, prioritization, and early-stage delivery discipline. For AI MVPs, that is often more useful than raw model enthusiasm. Many early AI products fail because they confuse technical novelty with a clear user outcome.

A team like thoughtbot becomes more valuable when your main risk is not “can this be built?” but “are we solving the right problem in the right order?” For founders willing to work with a consultancy-style partner that pushes back on bad assumptions, it stays relevant.

3. Uptech

Best for founders who want more strategic discovery before committing to an AI build.

Uptech is a good fit when the founder still needs stronger upfront product framing before the AI component is fully locked. That can be helpful in cases where the product concept sounds exciting, but the actual user workflow is still blurry.

For AI MVPs, this matters more than in classic SaaS. Many AI products look convincing in pitch form and fall apart once you define who uploads what, where the output is used, how errors are handled, and what the user does next. A strategy-heavy partner can reduce that risk.

4. DBB Software

Best for founders who want an engineering-forward partner for AI products with more technical depth.

DBB Software feels more suitable when the AI MVP already has some operational or architectural complexity. If the product will likely involve more system logic, backend orchestration, dashboards, or future platform growth, an engineering-forward agency can make sense earlier in the process.

The main value here is less about surface-level AI branding and more about being comfortable with a more technical delivery story. For some founders, that is a better fit once the AI use case is already reasonably clear.

5. Empat

Best for founders who want a startup-friendly team that still feels accessible and flexible.

Empat is relevant for founders who want an agency that feels startup-oriented rather than enterprise-shaped. That matters when the founder needs help across design, product framing, and launch, not just implementation.

In the AI MVP context, Empat may be most useful when the product still needs shaping and the founder wants a team that can keep the process understandable. It is less of a narrow AI specialist pick and more of a broad early-stage startup partner that can work well if the AI use case stays focused.

6. OmiSoft

Best for founders who want stronger technical credibility and investor-facing framing around AI delivery.

OmiSoft is a reasonable option for founders who already think ahead to reliability, future scalability, or technical depth beyond the MVP. That can matter when the product sits in a more demanding space or when the founder wants the delivery story to feel more technically mature from the start.

The tradeoff is that some very early founders may not need that level of framing yet. If you are still testing whether the AI output even changes user behavior, product restraint often matters more than technical sophistication.

7. Cieden

Best for founders whose AI MVP depends heavily on UX clarity.

Cieden becomes especially relevant when the hardest part of the AI product is not the intelligence layer itself, but the user experience around it. AI MVPs often fail because the interface does not explain what the system is doing, what the user should input, how confident the result is, or what happens when the output is wrong.

If your product has a high UX burden — for example, document review flows, generated recommendations, guided copilots, or assisted decision-making — a team with stronger UX instincts can be a smarter choice than a team selling AI more aggressively.

If that is your situation, “AI MVP Features in 2026: What’s Worth Building” is a useful read before you commit to a broader scope.

How to choose the right agency for an AI MVP

Do not choose based on who says “AI” the most. Choose based on who understands where AI belongs in the user journey.

If your product depends on trust, output quality, or careful user guidance, prioritize product judgment and UX. If your product has real backend or system complexity, prioritize technical clarity. If your biggest problem is still defining the first useful release, prioritize scope discipline above everything else.

This is also why “AI Development Agency vs Classic Development: What’s the Difference for Founders?” matters before you sign a contract. Many founders are not actually choosing between agencies. They are choosing between two different ways of thinking about risk.

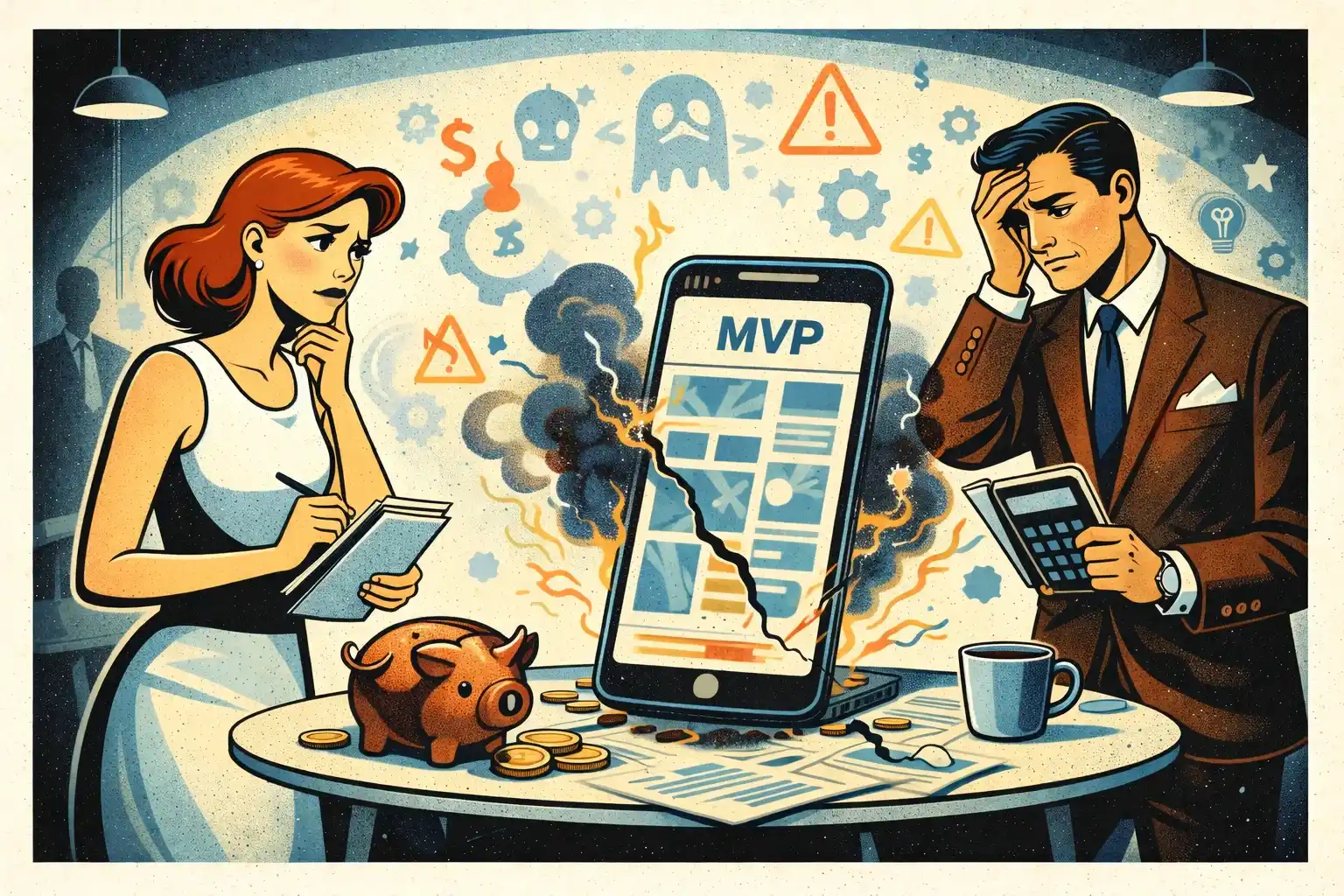

Red flags founders should watch for

The first red flag is when an agency treats AI like a decoration. If the examples sound exciting but nobody can explain the core workflow, the confidence threshold, or the fallback path, that is a bad sign.

The second red flag is overbuilding. You probably do not need custom model training, advanced memory systems, autonomous agents, and deep integrations in version one. You usually need one narrow user problem solved well enough to test real demand.

The third red flag is weak communication. If the team cannot explain model tradeoffs, latency, reliability, and manual review in simple language, a non-technical founder will struggle later when decisions get harder.

That is where “MVP Development for Non-Technical Founders: 7 Costly Mistakes” and “I Have a Startup Idea but No Developer: What to Do Next” become especially relevant.
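For founders who want to see what a “confidence threshold” and a “fallback path” look like in practice, here is a minimal sketch. It assumes a hypothetical support-ticket classifier; the names Routed, route_ticket, and stub_model, the 0.8 threshold, and the labels are all illustrative assumptions, not any agency's actual implementation. The point is only that a version-one AI feature can combine a simple rule, a model call, and a manual review path in a few lines.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical names throughout: Routed, route_ticket, and stub_model are
# illustrative and not taken from any real product or library.


@dataclass
class Routed:
    label: str
    handled_by: str  # "rule", "model", or "human_review"


def route_ticket(
    text: str,
    model: Callable[[str], Tuple[str, float]],
    threshold: float = 0.8,  # assumed value; tune against real user data
) -> Routed:
    """Classify one ticket: one input, one output, one fallback."""
    # Basic rule-based logic still belongs where the answer is obvious.
    if "refund" in text.lower():
        return Routed("billing", "rule")

    label, confidence = model(text)

    # Accept the model's answer only at or above the confidence threshold.
    if confidence >= threshold:
        return Routed(label, "model")

    # Fallback path: low-confidence outputs go to a person.
    return Routed("unclassified", "human_review")


def stub_model(text: str) -> Tuple[str, float]:
    """Stand-in for a real model call; returns a fixed label and a toy score."""
    return ("technical", 0.9 if "error" in text.lower() else 0.4)
```

An agency that understands AI product risk should be able to walk you through a flow like this for your own product: which cases a rule handles, when the model's answer is trusted, and who sees the output when it is not.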

What good AI MVP scope usually looks like

A good AI MVP is usually smaller than the founder expects. One workflow. One primary input. One useful output. One place where the user clearly sees the value.

That could mean summarizing a document, generating a first draft, classifying an input, recommending a next step, or extracting structured data from something messy. It does not need to look magical. It needs to save time, improve a decision, or remove friction in a repeated task.

The more an agency understands this, the safer your MVP process becomes. That is why “Why MVPs Still Fail in 2026” and “What Non-Technical Founders Should Know in 2026” fit naturally into this conversation.

Thinking about building an AI-powered MVP in 2026?

At Valtorian, we help founders design and launch modern web and mobile apps — including AI-powered workflows — with a focus on real user behavior, not demo-only prototypes.

Book a call with Diana

Let’s talk about your idea, scope, and fastest path to a usable MVP.

FAQ

What is an AI MVP agency?

It is a product and development team that helps founders launch an early version of an AI-powered product without building the full vision upfront.

Do I need custom AI models for an MVP?

Usually no. Most early AI MVPs should start with existing APIs or simpler workflows before custom model work is considered.

How do I know if an agency understands AI product risk?

Ask how they handle bad outputs, fallback flows, user trust, and what should stay manual in version one.

Should I choose an AI specialist agency or a general MVP agency?

It depends on the product. If AI is the core workflow risk, specialization can help. If the bigger problem is still product scope and launch discipline, a strong MVP partner may matter more.

What should stay out of version one for most AI products?

Custom training, multi-agent complexity, too many integrations, broad automation, and features added only because they sound advanced.

How long should an AI MVP take in 2026?

A focused MVP can often be shaped and launched in weeks, not months, if the workflow is narrow and the scope is controlled.
